Analysis

Igor has a wide variety of analysis capability. This file discusses analysis other than Curve Fitting, Signal Processing, Analysis of Functions, and Image Processing, which are discussed elsewhere.

Analysis on Multidimensional Waves

Many of the analysis operations in Igor Pro operate on 1D data. However, Igor Pro includes the following capabilities for analysis of multidimensional data:

-

The MatrixOp operation

-

Multidimensional FFT

-

2D and 3D Image Processing operations

-

2D and 3D interpolation operations and functions

Some of these topics are discussed in the Multidimensional Waves and Image Processing sections.

There are many analysis operations that are designed only for 1D data. Multidimensional waves do not appear in dialogs for these operations. If you invoke them on multidimensional waves from the command line or from an Igor procedure, Igor treats the multidimensional waves as if they were 1D. For example, the Histogram operation treat a 2D wave consisting of n rows and m columns as if it were a 1D wave with n*m rows. In some cases (e.g., WaveStats), the operation will be useful. In other cases, it will make no sense at all.

Waveform Versus XY Data

Igor is highly adapted for dealing with waveform data. In a waveform, data values are uniformly spaced in the X dimension. See The Waveform Model of Data.

If your data is uniformly spaced, you tell Igor what the spacing is using the SetScale operation. This is crucial because most of the built-in analysis operations and functions need to know this to work properly.

If your data is not uniformly spaced, you can treat it using XY pairs. This is discussed under The XY Model of Data. Some of the analysis operations and functions in Igor cannot handle XY pairs directly. To use these, you must either make a waveform representation of the XY pair or use Igor procedures that build on the built-in routines.

Converting XY Data to a Waveform

Sometimes the best way to analyze XY data is to make a uniformly-spaced waveform representation of it and analyze that. Most analysis operations are easier with waveform data. Other operations, such as the FFT, can be done only on waveform data. Often your XY data set is nearly uniformly-spaced so a waveform version of it is a very close approximation.

In fact, often XY data imported from other programs has an X wave that is completely unnecessary in Igor because the values in the X wave are actually a simple "series" (values that define a regular intervals, such as 2.2, 2.4, 2.6, 2.8, etc), in which case conversion to a waveform is a simple matter of assigning the correct X scaling to the Y data wave, using SetScale (or the Change Wave Scaling dialog):

SetScale/P x, xWave[0], xWave[1]-xWave[0], yWave

Now the X wave is superfluous and can be discarded:

KillWaves/Z xWave

The XY Pair to Waveform panel can be used to set the Y wave's X scaling when it detects that the X wave contains series data. See Using the XY Pair to Waveform Panel, below.

If your X wave is not a series, then to create a waveform representation of XY data you need to use interpolation. Interpolation creates a waveform from an XY pair by sampling the XY pair at uniform intervals.

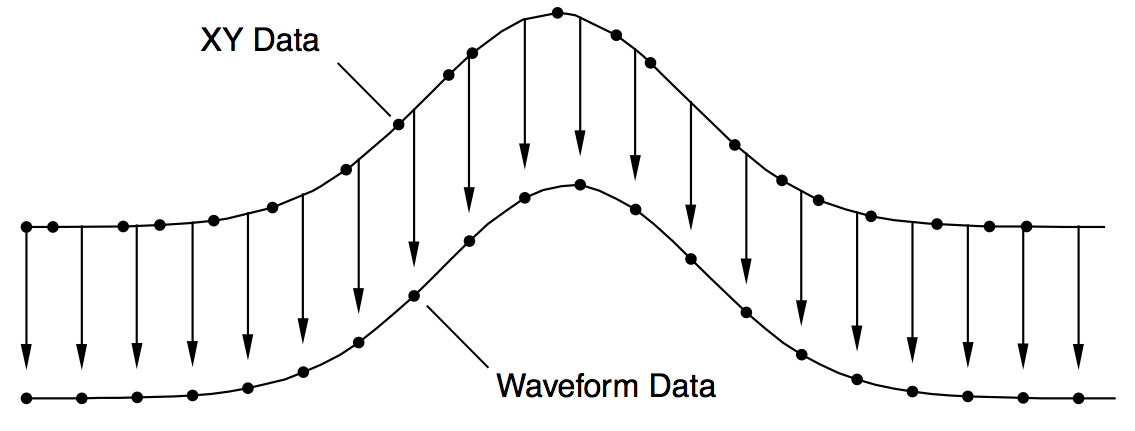

The diagram below shows how the XY pair defining the upper curve is interpolated to compute the uniformly-spaced waveform defining the lower curve. Each arrow indicates an interpolated waveform value:

Igor provides three tools for doing this interpolation: The XY Pair to Waveform panel, the built-in interp function and the Interpolate2 operation. To illustrate these tools we need some sample XY data. The following commands make sample data and display it in a graph:

Make/N=100 xData = .01*x + gnoise(.01)

Make/N=100 yData = 1.5 + 5*exp(-((xData-.5)/.1)^2)

Display yData vs xData

This creates a Gaussian shape. The x wave in our XY pair has some noise in it so the data is not uniformly spaced in the X dimension.

The x data goes roughly from 0 to 1.0 but, because our x data has some noise, it may not be monotonic. This means that, as we go from one point to the next, the x data usually increases but at some points may decrease. We can fix this by sorting the data.

Sort xData, xData, yData

This command uses the xData wave as the sort key and sorts both xData and yData so that xData always increases as we go from one point to the next.

Using the XY Pair to Waveform Panel

The XY Pair to Waveform panel creates a waveform from XY data using the SetScale or Interpolate2 operations, based on an automatic analysis of the X wave's data.

The required steps are:

-

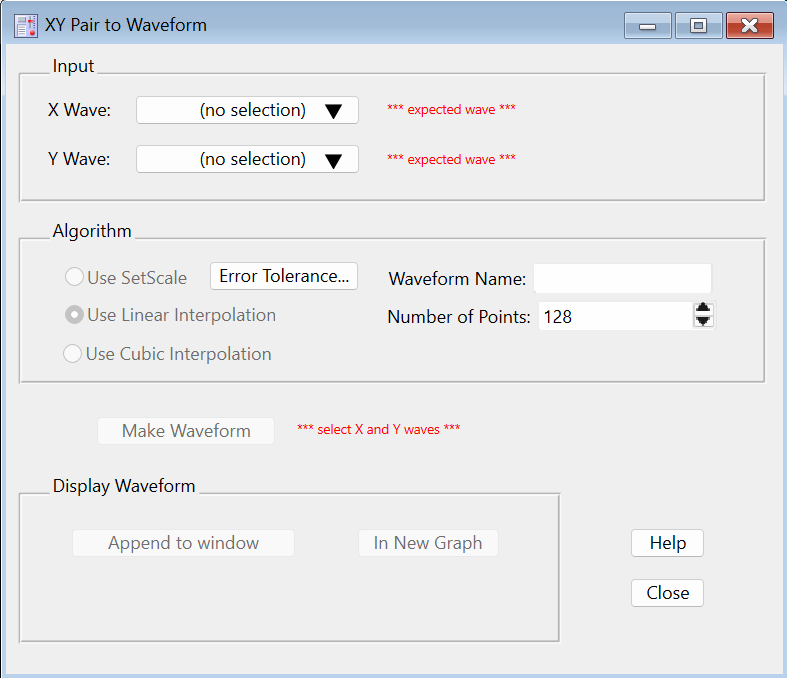

Select XY Pair to Waveform from Igor's Data→Packages submenu. The panel is displayed:

-

Select the X and Y waves (xData and yData) in the popup menus. When this example's xData wave is analyzed it is found to be "not regularly spaced (slope error avg= 0.52...)", which means that SetScale is not appropriate for converting yData into a waveform.

-

Use Interpolate is selected here, so you need a waveform name for the output. Enter any valid wave name.

-

Set the number of output points. Using a number roughly the same as length of the input waves is a good first attempt. You can choose a larger number later if the fidelity to the original is insufficient. A good number depends on how uneven the X values are - use more points for more unevenness.

-

Click Make Waveform.

-

To compare the XY representation of the data with the waveform representation, append the waveform to a graph displaying the XY pair. Make that graph the top graph, then click the

Append to <Name of Graph>button. -

You can revise the Number of Points and click Make Waveform to overwrite the previously created waveform in-place.

Using the Interp Function

We can use the interp function to create a waveform version of our Gaussian. The required steps are:

-

Make a new wave to contain the waveform representation.

-

Use the SetScale operation to define the range of X values in the waveform.

-

Use the interp function to set the data values of the waveform based on the XY data.

Here are the commands:

Duplicate yData, wData

SetScale/I x 0, 1, wData

wData = interp(x, xData, yData)

To compare the waveform representation to the XY representation, we append the waveform to the graph.

AppendToGraph wData

Let's take a closer look at what these commands are doing.

First, we cloned yData and created a new wave, wData. Since we used Duplicate, wData will have the same number of points as yData. We could have made a waveform with a different number of points. To do this, we would use the Make operation instead of Duplicate.

The SetScale operation sets the X scaling of the wData waveform. In this example, we are setting the X values of wData to go from 0 up to and including 1.0. This means that our waveform representation will contain 100 values at uniform intervals in the X dimension from 0 to 1.0.

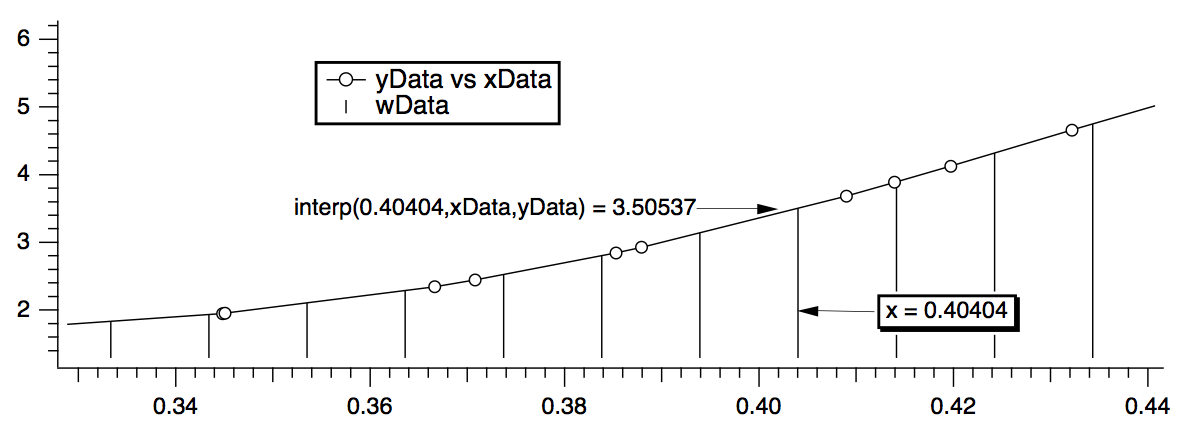

The last step uses a waveform assignment to set the data values of wData. This assignment evaluates the right-hand expression once for each point in wData. For each evaluation, x takes on a different value from 0 to 1.0. The interp function returns the value of the curve yData versus xData at x. For instance, x=.40404 (point number 40 of wData) falls between two points in the XY curve. The interp function linearly interpolates between those values to estimate a data value of 3.50537:

We can wrap these calculations up into an Igor procedure that can create a waveform version of any XY pair.

Function XYToWave1(xWave, yWave, wWaveName, numPoints)

Wave/D xWave // X wave in the XY pair

Wave/D yWave // Y wave in the XY pair

String wWaveName // Name to use for new waveform wave

Variable numPoints // Number of points for waveform

Make/O/N=(numPoints) $wWaveName // Make waveform.

Wave wWave= $wWaveName

WaveStats/Q xWave // Find range of x coords

SetScale/I x V_min, V_max, wWave // Set X scaling for wave

wWave = interp(x, xWave, yWave) // Do the interpolation

End

This function uses the WaveStats operation to find the X range of the XY pair. WaveStats creates the variables V_min and V_max (among others). See Accessing Variables Used by Igor Operations for details.

The function makes the assumption that the input waves are already sorted. We left the sort step out because the sorting would be a side-effect and we prefer that procedures not have nonobvious side effects.

To use the WaveMetrics-supplied XYToWave1 function, include the "XY Pair To Waveform" procedure file. See The Include Statement for instructions on including a procedure file.

If you have blanks (NaNs) in your input data, the interp function will give you blanks in your output waveform as well. The Interpolate2 operation, discussed in the next section, interpolates across gaps in data and does not produce blanks in the output.

Using the Interpolate2 Operation

The Interpolate2 operation provides not only linear but also cubic and smoothing spline interpolation. Furthermore, it does not require the input to be sorted and can automatically make the destination waveform and set its X scaling. It also has a dialog that makes it easy to use interactively.

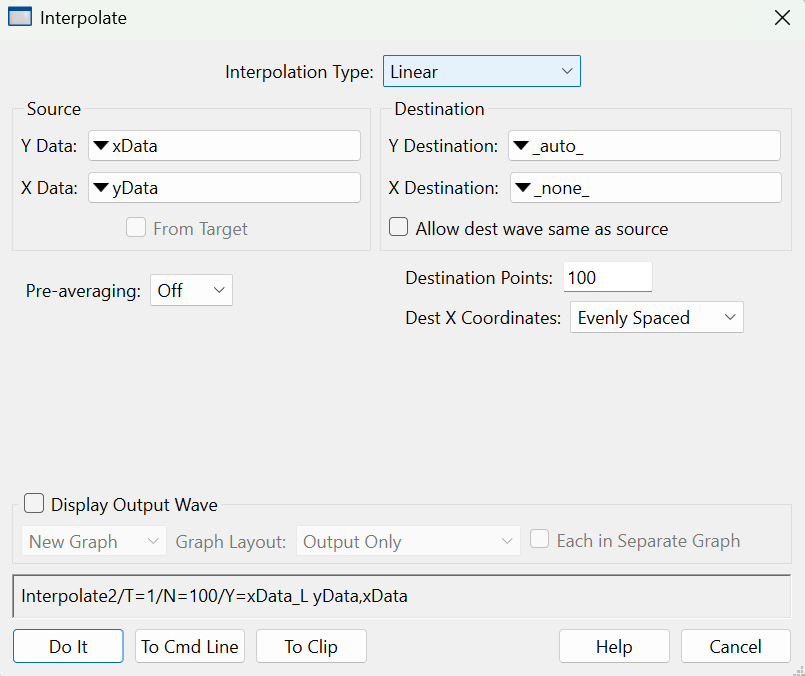

To use it on our sample XY data, choose Analysis→Interpolate and set up the dialog as shown below.

Choosing "_auto_" for Y Destination auto-names the destination wave by appending "_L" to the name of the input "Y data" wave. Choosing "_none_" as the "X destination" tells Interpolate that we want to make a waveform from the input XY pair rather than making a new XY pair.

Here is a rewrite of the XYToWave1 function that uses the Interpolate2 operation rather than the interp function.

Function XYToWave2(xWave, yWave, wWaveName, numPoints)

Wave xWave // X wave in the XY pair

Wave yWave // Y wave in the XY pair

String wWaveName // Name to use for new waveform wave

Variable numPoints // Number of points for waveform

Interpolate2/T=1/N=(numPoints)/Y=$wWaveName xWave, yWave

End

Blanks in the input data are ignored.

For details on Interpolate2, see The Interpolate2 Operation.

Dealing with Missing Values

A missing value is represented in Igor by the value NaN which means "Not a Number". A missing value is also called a "blank", because it appears as a blank cell in a table.

When a NaN is combined arithmetically with any value, the result is NaN. To see this, try the following command:

Print 3+NaN, NaN/5, sin(NaN)

By definition, a NaN is not equal to anything. Consequently, the condition in this statement:

if (myValue == NaN)

is always false.

The workaround is to use the NumType function:

if (NumType(myValue) == 2) // Is it a NaN?

See also NaNs, INFs and Missing Values for more about how NaN values.

Some routines in Igor deal with missing values by ignoring them. CurveFit is one example. Others may produce unexpected results in the presence of missing values. Examples are the FFT operation and the area and mean functions.

Here are some strategies for dealing with missing values.

Replace the Missing Values With Another Value

You can replace NaNs in a wave with this statement:

wave0 = NumType(wave0)==2 ? 0:wave0 // Replace NaNs with zero

If you're not familiar with the :? operator, see ? :.

For multi-dimensional waves you can replace NaNs using MatrixOp. For example:

Make/O/N=(3,3) matNaNTest = p + 10*q

Edit matNaNTest

matNaNTest[0][0] = NaN; matNaNTest[1][1] = NaN; matNaNTest[2][2] = NaN

MatrixOp/O matNaNTest=ReplaceNaNs(matNaNTest,0) // Replace NaNs with 0

Remove the Missing Values

For 1D waves you can remove NaNs using WaveTransform zapNaNs. For example:

Make/N=5 NaNTest = p

Edit NaNTest

NaNTest[1] = NaN; NaNTest[4] = NaN

WaveTransform zapNaNs, NaNTest

There is no built-in operation to remove NaNs from an XY pair if the NaN appears in either the X or Y wave. You can do this, however, using the RemoveNaNsXY procedure in the "Remove Points" WaveMetrics procedure file which you can access through Help→Windows→WM Procedures Index.

There is no operation to remove NaNs from multi-dimensional waves as this would require removing the entire row and entire column where each NaN appeared.

Work Around Gaps in Data

Many analysis routines can work on a subrange of data. In many cases you can just avoid the regions of data that contain missing values. In other cases you can extract a subset of your data, work with it and then perhaps put the modified data back into the original wave.

Here is an example of extract-modify-replace (even though Smooth properly accounts for NaNs):

Make/N=100 data1= sin(P/8)+gnoise(.05); data1[50]= NaN

Display data1

Duplicate/R=[0,49] data1,tmpdata1 // start work on first set

Smooth 5,tmpdata1

data1[0,49]= tmpdata1[P] // put modified data back

Duplicate/O/R=[51,] data1,tmpdata1 // start work on 2nd set

Smooth 5,tmpdata1

data1[51,]= tmpdata1[P-51]

KillWaves tmpdata1

Replace Missing Data with Interpolated Values

You can replace NaN data values prior to performing operations that do not take kindly to NaNs by replacing them with smoothed or interpolated values using Smooth, Loess, or The Interpolate2 Operation.

Replace Missing Data using the Interpolate2 Operation

By using the same number of points for the destination as you have source points, you can replace NaNs without modifying the other data.

If you have waveform data, simply duplicate your data and perform linear interpolation using the same number of points as your data. For example, assuming 100 data points:

Duplicate data1,data1a

Interpolate/T=1/N=100/Y=data1a data1

If you have XY data, the Interpolate2 operation has the ability to include the input x values in the output X wave. For example:

Duplicate data1, yData1, xData1

xData1 = x

Display yData1 vs xData1

Interpolate2/T=1/N=100/I/Y=yData1a/X=xData1a xData1,yData1

If, after performing an operation on your data, you wish to put the modified data back in the source wave while maintaining the original missing values you can use a wave assignment similar to this:

yData1 = (numtype(yData1) == 0) ? yData1 : yData1a

This technique can also be applied using interpolated results generated by the Loess and Smooth operations.

Replace Missing Data using Median Smoothing

You can use the Smooth dialog to replace each NaN with the median of surrounding values.

Select the Median smoothing algorithm, select "NaNs" from the Replace popup, and choose "Median" for the "with:" radio button. Enter the number of surrounding points used to compute the median (an odd number is best).

You can choose to overwrite the NaNs or create a new waveform with the result. The Smooth dialog produces commands like this:

Duplicate/O data1,data1_smth;DelayUpdate

Smooth/M=(NaN) 5, data1_smth

Interpolation

Igor Pro has a number of interpolation tools that are designed for different applications. We summarize these in the table below.

| Data | Operation/Function | Interpolation Method |

|---|---|---|

| 1D waves | Wave assignment, e.g., val=wave(x) | Linear |

| 1D waves | Smooth | Running median, average, binomial, Savitsky-Golay |

| 1D XY waves | interp | Linear |

| 1D single or XY waves | The Interpolate2 Operation | Linear, cubic spline, smoothing spline |

| 1D or 2D single or XY | Loess | Locally-weighted regression |

| Triplet XYZ waves | ImageInterpolate | Voronoi |

| 1D X, Y, Z waves | Data→Packages→XYZ to Matrix | Voronoi |

| 1D X, Y, Z waves | Loess | Locally-weighted regression |

| 2D waves | ImageInterpolate | Bilinear, splines, Kriging, Voronoi |

| 2D waves | Interp2D | Bilinear |

| 2D waves | SphericalInterpolate | Voronoi |

| 3D waves | Interp3d | extractSurface, Trilinear |

| Interp3DPath | extractSurface, Trilinear | |

| ImageTransform | extractSurface, Trilinear | |

| 3D scatter data | Interpolate3D | Barycentric |

| 4D waves | Interp4D | 4D Linear |

| Interp4dPath | 4D Linear |

All the interpolation methods in this table consist of two common steps. The first step involves the identification of data points that are nearest to the interpolation location and the second step is the computation of the interpolated value using the neighboring values and their relative proximity. You can find the specific details in the documentation of the individual operation or function.

Interpolation Demo

Open Smooth Curve Through Noise Demo

The Interpolate2 Operation

The Interpolate2 operation performs linear, cubic spline and smoothing cubic spline interpolation on 1D waveform and XY data. The cubic spline interpolation is based on a routine in "Numerical Recipes in C". The smoothing spline is based on "Smoothing by Spline Functions", Christian H. Reinsch, Numerische Mathematic 10, 177-183 (1967).

Prior to Igor 7, Interpolate2 was implemented as part of the Interpolate XOP. It is now built-in.

The Interpolate XOP also implemented an older operation named Interpolate which used slightly different syntax. If you are using the Interpolate operation, we recommend that you convert to using Interpolate2.

The main use for linear interpolation is to convert an XY pair of waves into a single wave containing Y values at evenly spaced X values so that you can use Igor operations, like FFT, which require evenly spaced data.

Cubic spline interpolation is most useful for putting a pleasingly smooth curve through arbitrary XY data. The resulting curve may contain features that have nothing to do with the original data so you should be wary of using the cubic spline for analytic rather than esthetic purposes.

Both linear and cubic spline interpolation are constrained to put the output curve through all of the input points and thus work best with a small number of input points. The smoothing spline does not have this constraint and thus works well with large, noisy data sets.

The Interpolate2 operation has a feature called "pre-averaging" which can be used when you have a large number of input points. Pre-averaging was added to Interpolate2 as a way to put a cubic spline through a large, noisy data set before it supported the smoothing spline. We now recommend that you try the smoothing spline instead of pre-averaging.

The Smooth Curve Through Noise example experiment illustrates spline interpolation.

Open Smooth Curve Through Noise Demo

Spline Interpolation Example

Before going into a complete discussion of Interpolate2, let's look at a simple example first.

You can execute the commands in this example by selecting them and pressing control-return or control-enter.

First, make some sample XY data using the following commands:

Make/N=10 xData, yData // Make source data

xData = p; yData = 3 + 4*xData + gnoise(2) // Create sample data

Display yData vs xData // Make a graph

Modify mode=2, lsize=3 // Display source data as dots

Now, choose Analysis→Interpolate to invoke the Interpolate dialog.

Set Interpolation Type to Cubic Spline.

Choose yData from the Y Data pop-up menu.

Choose xData from the X Data pop-up menu.

Choose _auto_ from the Y Destination pop-up menu.

Choose _none_ from the X Destination pop-up menu.

Enter 200 in the Destination Points box.

Choose Off from the Pre-averaging pop-up menu.

Choose Evenly Spaced from the Dest X Coords pop-up menu.

Click Natural in the End Points section.

Notice that the dialog has generated the following command:

Interpolate2/T=2/N=200/E=2/Y=yData_CS xData, yData

This says that Interpolate2 will use yData as the Y source wave, xData as the X source wave and that it will create yData_CS as the destination wave. _CS means "cubic spline".

Click the Do It button to do the interpolation.

Now add the yData_CS destination wave to the graph using the command:

AppendToGraph yData_CS; Modify rgb(yData_CS)=(0,0,65535)

Now let's try this with a larger number of input data points. Execute:

Redimension/N=500 xData, yData

xData = p/50; yData = 10*sin(xData) + gnoise(1.0) // Create sample data

Modify lsize(yData)=1 // Smaller dots

Now choose Analysis→Interpolate to invoke the Interpolate dialog. All the settings should be as you left them from the preceding exercise. Click the Do It button.

Notice that the resulting cubic spline attempts to go through all of the input data points. This is usually not what we want.

Now, choose Analysis→Interpolate again and make the following changes:

Choose Smoothing Spline from the Interpolation Type pop-up menu. Notice the command generated now references a wave named yData_SS. This will be the output wave.

Enter 1.0 for the smoothing factor. This is usually a good value to start from.

In the Standard Deviation section, click the Constant radio button and enter 1.0 as the standard deviation value. 1.0 is correct because we know that our data has noise with a standard deviation of 1.0, as a result of the "gnoise(1.0)" term above.

Click the Do It button to do the interpolation. Append the yData_SS destination wave to the graph using the command:

AppendToGraph yData_SS; Modify rgb(yData_SS)=(0,0,0)

Notice that the smoothing spline adds a pleasing curve through the large, noisy data set. If necessary, enlarge the graph window so you can see this.

You can tweak the smoothing spline using either the smoothing factor parameter or the standard deviation parameter. If you are unsure of the standard deviation of the noise, leave the smoothing factor set to 1.0 and try different standard deviation values. It is usually not too hard to find a reasonable value.

The Interpolate Dialog

Choosing Analysis→Interpolate summons the Interpolate dialog from which you can choose the desired type of interpolation, the source wave or waves, the destination wave or waves, and the number of points in the destination waves. This dialog generates an Interpolate2 command which you can execute, copy to the clipboard or copy to the command line.

From the Interpolation Type pop-up menu, choose Linear or Cubic Spline or Smoothing Spline. Cubic spline is good for a small input data set. Smoothing spline is good for a large, noisy input data set.

If you choose Cubic Spline, a Pre-averaging pop-up menu appear. The pre-averaging feature is largely no longer needed and is not recommended. Use the smoothing spline instead of the cubic spline with pre-averaging.

If you choose smoothing spline, a Smoothing Factor item and Standard Deviant controls appear. Usually it is best to set the smoothing factor to 1.0 and use the constant mode for setting the standard deviation. You then need to enter an estimate for the standard deviation of the noise in your Y data. Then try different values for the standard deviation until you get a satisfactory smooth spline through your data. See Smoothing Spline Parameters for further details.

From the Y Data and X Data pop-up menus, choose the source waves that define the data through which you want to interpolate. If you choose a wave from the X Data pop-up, Interpolate2 uses the XY curve defined by the contents of the Y data wave versus the contents of the X data wave as the source data. If you choose _calculated_ from the X Data pop-up, it uses the X and Y values of the Y data wave as the source data.

If you click the From Target checkbox, the source and destination pop-up menus show only waves in the target graph or table.

The X and Y data waves must have the same number of points. They do not need to have the same data type.

The X and Y source data does not need to be sorted before using Interpolate2. If necessary, Interpolate2 sorts a copy of the input data before doing the interpolation.

NaNs (missing values) and INFs (infinite values) in the source data are ignored. Any point whose X or Y value is NaN or INF is treated as if it did not exist.

Enter the number of points you want in the destination waves in the Destination Points box. 200 points is usually good.

From the Y Destination and X Destination pop-up menus, choose the waves to contain the result of the interpolation. For most cases, choose _auto_ for the Y destination wave and _none_ for the X destination wave. This gives you an output waveform. Other options, useful for less common applications, are described in the following paragraphs.

If you choose _auto_ from the Y Destination pop-up, Interpolate2 puts the Y output data into a wave whose name is derived by adding a suffix to the name of the Y data wave. The suffix is "_L" for linear interpolation, "_CS" for cubic spline interpolation and "_SS" for smoothing spline interpolation. For example, if the Y data wave is called "yData", then the default Y destination wave will be called "yData_L", "yData_CS" or "yData_SS".

If you choose _none_ for the X destination wave, Interpolate2 puts the Y output data in the Y destination wave and sets the X scaling of the Y destination wave to represent the X output data.

If you choose _auto_ from the X Destination pop-up, Interpolate2 puts the X output data into a wave whose name is derived by adding the appropriate suffix to the name of the X data wave. If the X data wave is "xData" then the X destination wave will be "xData_L", "xData_CS" or "xData_SS". If there is no X data wave then the X destination wave name is derived by adding the letter "x" to the name of the Y destination wave. For example, if the Y destination is "yData_CS" then the X destination wave will be "yData_CSx".

For both the X and the Y destination waves, if the wave already exists, Interpolate2 overwrites it. If it does not already exist, Interpolate2 creates it.

The destination waves will be double-precision unless they already exist when Interpolate2 is invoked. In this case, Interpolate2 leaves single-precision destination waves as single-precision. For any other precision, Interpolate2 changes the destination wave to double-precision.

The Dest X Coords pop-up menu gives you control over the X locations at which the interpolation is done. Usually you should choose Evenly Spaced. This generates interpolated values at even intervals over the range of X input values.

The Evenly Spaced Plus Input X Coords setting is the same as Evenly Spaced except that Interpolate2 makes sure that the output X values include all of the input X values. This is usually not necessary. This mode is not available if you choose _none_ for your X destination wave.

The Log Spaced setting makes the output evenly spaced on a log axis. This mode ignores any non-positive values in your input X data. It is not available if you choose _none_ for your X destination wave. See Interpolating Exponential Data for an alternative.

The From Dest Wave setting takes the output X values from the X coordinates of the destination wave. The Destination Points setting is ignored. You could use this, for example, to get a spline through a subset of your input data. You must create your destination waves before doing the interpolation for this mode. If your destination is a waveform, use the SetScale operation to define the X values of the waveform. Interpolate2 will calculate its output at these X values. If your destination is an XY pair, set the values of the X destination wave. Interpolate2 will create a sorted version of these values and will then calculate its output at these values. If the X destination wave was originally reverse-sorted, Interpolate2 will reverse the output.

The End Points radio buttons apply only to the cubic spline. They control the destination waves in the first and last intervals of the source wave. Natural forces the second derivative of the spline to zero at the first and last points of the destination waves. Match 1st Derivative forces the slope of the spline to match the straight lines drawn between the first and second input points, and between the last and next-to-last input points. In most cases it doesn't much matter which of these alternatives you use.

Interpolating Exponential Data

It is common to plot data that spans orders of magnitude, such as data arising from exponential processes, versus log axes. To create an interpolated data set from such data, it is often best to interpolate the log of the original data rather than the original data itself.

The Interpolate2 Log Demo experiment demonstrates how to do such interpolation. Here is a snippet from that demo experiment:

Function DoLogYInterpolate2(xInput, yInput, numOutputPoints, xOutput, yOutput)

Wave xInput, yInput

int numOutputPoints

Wave& xOutput // Output wave reference

Wave& yOutput // Output wave reference

// Create log(yInput) to use in interpolation

Duplicate/FREE yInput, logYInput // Free wave is automatically killed when function exits

logYInput = log(yInput)

String xOutName = NameOfWave(xInput) + "_interp"

String yOutName = NameOfWave(yInput) + "_interp"

Interpolate2 /T=1 /I=0 /N=(numOutputPoints) /X=$xOutName /Y=$yOutName xInput, logYInput

WAVE xOutput = $xOutName

WAVE yOutput = $yOutName

// yOutput was set based on log(yInput). Transform it back to original scale.

yOutput = 10^yOutput

End

Smoothing Spline Algorithm

The smoothing spline algorithm is based on "Smoothing by Spline Functions", Christian H. Reinsch, Numerische Mathematik 10. It minimizes

among all functions g(x) such that

where g(xi) is the value of the smooth spline at a given point, yi is the Y data at that point, σi is the standard deviation of that point, and S is the smoothing factor.

Smoothing Spline Parameters

The smoothing spline operation requires a standard deviation parameter and a smoothing factor parameter. The standard deviation parameter should be a good estimate of the standard deviation of the noise in your Y data. The smoothing factor should nominally be close to 1.0, assuming that you have an accurate standard deviation estimate.

Using the Standard Deviation section of the Interpolate dialog, you can choose one of three options for the standard deviation parameter: None, Constant, From Wave.

If you choose None, Interpolate2 uses an arbitrary standard deviation estimate of 0.05 times the amplitude of your Y data. You can then play with the smoothing factor parameter until you get a pleasing smooth spline. Start with a smoothing factor of 1.0. This method is not recommended.

If you choose Constant, you can then enter your estimate for the standard deviation of the noise and Interpolate2 uses this value as the standard deviation of each point in your Y data. If your estimate is good, then a smoothing factor around 1.0 will give you a nice smooth curve through your data. If your initial attempt is not quite right, you should leave the smoothing factor at 1.0 and try another estimate for the standard deviation. For most types of data, this is the preferred method.

If you choose From Wave then Interpolate2 expects that each point in the specified wave contains the estimated standard deviation for the corresponding point in the Y data. You should use this method if you have an appropriate wave.

Interpolate2's Pre-averaging Feature

A linear or cubic spline interpolation goes through all of the input data points. If you have a large, noisy data set, this is probably not what you want. Instead, use the smoothing spline.

Before Interpolate2 had a smoothing spline, we recommended that you use the cubic spline to interpolate through a decimated version of your input data. The pre-averaging feature was designed to make this easy.

Because Interpolate2 now supports the smoothing spline, the pre-averaging feature is no longer necessary. However, we still support it for backward compatibility.

When you turn pre-averaging on, Interpolate2 creates a temporary copy of your input data and reduces it by decimation to a smaller number of points, called nodes. Interpolate2 then usually adds nodes at the very start and very end of the data. Finally, it does an interpolation through these nodes.

Identical Or Nearly Identical X Values

This section discusses a degenerate case that is of no concern to most users.

Input data that contains two points with identical X values can cause interpolation algorithms to produce unexpected results. To avoid this, if Interpolate2 encounters two or more input data points with nearly identical X values, it averages them into one value before doing the interpolation. This behavior is separate from the pre-averaging feature. This is done for the cubic and smoothing splines except when the Dest X Coords mode is is Log Spaced or From Dest Wave. It is not done for linear interpolation.

Two points are considered nearly identical in X if the difference in X between them (dx) is less than 0.01 times the nominal dx. The nominal dx is computed as the X span of the input data divided by the number of input data points.

Destination X Coordinates from Destination Wave

This mode, which we call "X From Dest" mode for short, takes effect if you choose From Dest Wave from the Dest X Coords pop-up menu in the Interpolate dialog or use the Interpolate2 /I=3 flag. In this mode the number of output points is determined by the destination wave and the /N flag is ignored.

In X From Dest mode, the points at which the interpolation is done are determined by the destination wave. The destination may be a waveform, in which case the interpolation is done at its X values. Alternatively the destination may be an XY pair in which case the interpolation is done at the data values stored in the X destination wave.

Here is an example using a waveform as the destination:

Make /O /N=20 wave0 // Generate source data

SetScale x 0, 2*PI, wave0

wave0 = sin(x) + gnoise(.1)

Display wave0

ModifyGraph mode=3

Make /O /N=1000 dest0 // Generate dest waveform

SetScale x 0, 2*PI, dest0

AppendToGraph dest0

ModifyGraph rgb(dest0)=(0,0,65535)

Interpolate2 /T=2 /I=3 /Y=dest0 wave0 // Do cubic spline interpolation

If your destination is an XY pair, Interpolate2 creates a sorted version of these values and then calculates its output at the X destination wave's values. If the X destination wave was originally reverse-sorted, as determined by examining its first and last values, Interpolate2 reverses the output after doing the interpolation to restore the original ordering.

In X From Dest mode with an XY pair as the destination, the X wave may contain NaNs. In this case, Interpolate2 creates an internal copy of the XY pair, removes the points where the X destination is NaN, does the interpolation, and copies the results to the destination XY pair. During this last step, Interpolate2 restores the NaNs to their original locations in the X destination wave. Here is an example of an XY destination with a NaN in the X destination wave:

Make/O xData={1,2,3,4,5}, yData={1,2,3,4,5} // Generate source data

Display yData vs xData

Make/O xDest={1,2,NaN,4,5}, yDest={0,0,0,0,0} // Generate dest XY pair

ModifyGraph mode=3,marker=19

AppendToGraph yDest vs xDest

ModifyGraph rgb(yDest)=(0,0,65535)

Interpolate2 /T=1 /I=3 /Y=yDest /X=xDest xData, yData // Do linear interpolation

Differentiation and Integration

The Differentiate and Integrate operations provide a number of algorithms for operation on one-dimensional waveform and XY data. These operations can either replace the original data or create a new wave with the results. The easiest way to use these operations is via dialogs available from the Analysis menu.

For most applications, trapezoidal integration and central differences differentiation are appropriate methods. However, when operating on XY data, the different algorithms have different requirements for the number of points in the X wave. If your X wave does not show up in the dialog, try choosing a different algorithm or click the help button to see what the requirements are.

When operating on waveform data, X scaling is taken into account; you can turn this off using the /P flag. You can use the SetScale operation to define the X scaling of your Y data wave.

Although these operations work along just one dimension, they can be targeted to operate along rows or columns of a matrix (or even higher dimensions) using the /DIM flag.

The Integrate operation replaces or creates a wave with the numerical integral. For finding the area under a curve, see Areas and Means.

Areas and Means

You can compute the area and mean value of a wave in several ways using Igor.

Perhaps the simplest way to compute a mean value is with the Wave Stats dialog in the Statistics menu. You select the wave, type in the X range (or use the current cursor positions), click Do It, and Igor prints several statistical results to the history area. Among them is V_avg, which is the average, or mean value. This is the same value that is returned by Igor's mean function, which is faster because it doesn't compute any other statistics. The mean function returns NaN if any data within the specified range is NaN. The WaveStats operation, on the other hand, ignores such missing values.

WaveStats and the mean function use the same method for computing the mean: find the waveform values within the given X range, sum them together, and divide by the number of values. The X range serves only to select the values to combine. The range is rounded to the nearest point numbers.

If you consider your data to describe discrete values, such as a count of events, then you should use either WaveStats or the mean function to compute the average value. You can most easily compute the total number of events, which is an area of sorts, using the sum function. It can also be done easily by multiplying the WaveStats outputs V_avg and V_npnts.

If your data is a sampled representation of a continuous process such as a sampled audio signal, you should use the faverage function to compute the mean, and the area function to compute the area. These two functions use the same linear interpolation scheme as does trapezoidal integration to estimate the waveform values between data points. The X range is not rounded to the nearest point. Partial X intervals are included in the calculation through this linear interpolation.

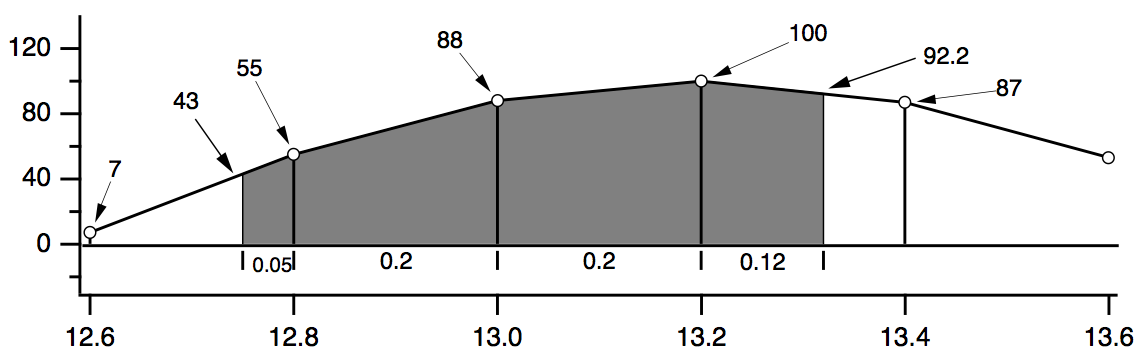

The diagram below shows the calculations each function performs for the data shown. The two values 43 and 92.2 are linear interpolation estimates. The diagram compares the area, faverage, and mean functions over the interval (12.75,13.32).

WaveStats/R=(12.75,13.32) wave

V_avg = (55+88+100+87)/4 = 82.5

mean(wave,12.75,13.32)

= (55+88+100+87)/4 = 82.5

area(wave,12.75,13.32) =

0.05·(43+55)/2 // first trapezoid

+ 0.20·(55+88)/2 // second trapezoid

+ 0.20·(88+100)/2 // third trapezoid

+ 0.12·(100+92.2)/2 // fourth trapezoid

= 47.082

faverage(wave,12.75,13.32) = area(wave,12.75,13.32) / (13.32-12.75)

= 47.082/0.57 = 82.6

Note that only the area function is affected by the X scaling of the wave. faverage eliminates the effect of X scaling by dividing the area by the same X range that area multiplied by.

One problem with these functions is that they cannot be used if the given range of data has missing values (NaNs). See Dealing with Missing Values for details.

X Ranges and the Mean, faverage, and area Functions

The X range input for the mean, faverage and area functions are optional. Thus, to include the entire wave you don't have to specify the range:

Make/N=10 wave=2; Edit wave.xy // X ranges from 0 to 9

Print area(wave) // entire X range, and no more

18

Sometimes, in programming, it is not convenient to determine whether a range is beyond the ends of a wave. Fortunately, these functions also accept X ranges that go beyond the ends of the wave.

Print area(wave, 0, 9) // entire X range, and no more

18

You can use expressions that evaluate to a range beyond the ends of the wave:

Print leftx(wave),rightx(wave)

0 10

Print area(wave,leftx(wave),rightx(wave)) // entire X range, and more

18

or even an X range of ±∞:

Print area(wave, -Inf, Inf) // entire X range of the universe

18

Finding the Mean of Segments of a Wave

Under Analysis Programming is a function that finds the mean of segments of a wave where you specify the length of the segments. It creates a new wave to contain the means for each segment.

Area for XY Data

To compute the area of a region of data contained in an XY pair of waves, use the areaXY function. There is also an XY version of the faverage function; see faverageXY .

In addition you can use the AreaXYBetweenCursors WaveMetrics procedure file which contains the AreaXYBetweenCursors and AreaXYBetweenCursorsLessBase procedures. For instructions on loading the procedure file, see WaveMetrics Procedures Folder. Use the Info Panel and Cursors to delimit the X range over which to compute the area. AreaXYBetweenCursorsLessBase removes a simple trapezoidal baseline - the straight line between cursors.

Wave Statistics

The WaveStats operation computes various descriptive statistics relating to a wave and prints them in the history area of the command window. It also stores the statistics in a series of special variables or in a wave so you can access them from a procedure.

The statistics printed and the corresponding special variables are:

| V_npnts | Number of points in range excluding points whose value is NaN or INF. | |

| V_numNans | Number of NaNs. | |

| V_numINFs | Number of INFs. | |

| V_avg | Average of data values. | |

| V_sum | Sum of data values. | |

| V_sdev | Standard deviation of data values, | |

| "Variance" is V_sdev2. | ||

| V_sem | Standard error of the mean | |

| V_rms | RMS of Y values | |

| V_adev | Average deviation | |

| V_skew | Skewness | |

| V_kurt | Kurtosis | |

| V_minloc | X location of minimum data value. | |

| V_min | Minimum data value. | |

| V_maxloc | X location of maximum data value. | |

| V_max | Maximum data value. | |

| V_minRowLoc | Row containing minimum data value. | |

| V_maxRowLoc | Row containing maximum data value. | |

| V_minColLoc | Column containing minimum data value (2D or higher waves). | |

| V_maxColLoc | Column containing maximum data value (2D or higher waves). | |

| V_minLayerLoc | Layer containing minimum data value (3D or higher waves). | |

| V_maxLayerLoc | Layer containing maximum data value (3D or higher waves). | |

| V_minChunkLoc | Chunk containing minimum data value (4D waves only). | |

| V_maxChunkLoc | Chunk containing maximum data value (4D waves only). | |

| V_startRow | The unscaled index of the first row included in calculating statistics. | |

| V_endRow | The unscaled index of the last row included in calculating statistics. | |

| V_startCol | The unscaled index of the first column included in calculating statistics. Set only when /RMD is used. | |

| V_endCol | The unscaled index of the last column included in calculating statistics. Set only when /RMD is used. | |

| V_startLayer | The unscaled index of the first layer included in calculating statistics. Set only when /RMD is used. | |

| V_endLayer | The unscaled index of the last layer included in calculating statistics. Set only when /RMD is used. | |

| V_startChunk | The unscaled index of the first chunk included in calculating statistics. Set only when /RMD is used. | |

| V_endChunk | The unscaled index of the last chunk included in calculating statistics. Set only when /RMD is used. | |

To use the WaveStats operation, choose Wave Stats from the Statistics menu.

Igor ignores NaNs and INFs in computing the average, standard deviation, RMS, minimum and maximum. NaNs result from computations that have no defined mathematical meaning. They can also be used to represent missing values. INFs result from mathematical operations that have no finite value.

This procedure illustrates the use of WaveStats. It shows the average and standard deviation of a source wave, assumed to be displayed in the top graph. It draws lines to indicate the average and standard deviation.

Function ShowAvgStdDev(source)

Wave source // source waveform

Variable left=leftx(source),right=rightx(source) // source X range

WaveStats/Q source

SetDrawLayer/K ProgFront

SetDrawEnv xcoord=bottom,ycoord=left,dash= 7

DrawLine left, V_avg, right, V_avg // show average

SetDrawEnv xcoord=bottom,ycoord=left,dash= 7

DrawLine left, V_avg+V_sdev, right, V_avg+V_sdev // show +std dev

SetDrawEnv xcoord=bottom,ycoord=left,dash= 7

DrawLine left, V_avg-V_sdev, right, V_avg-V_sdev // show -std dev

SetDrawLayer UserFront

End

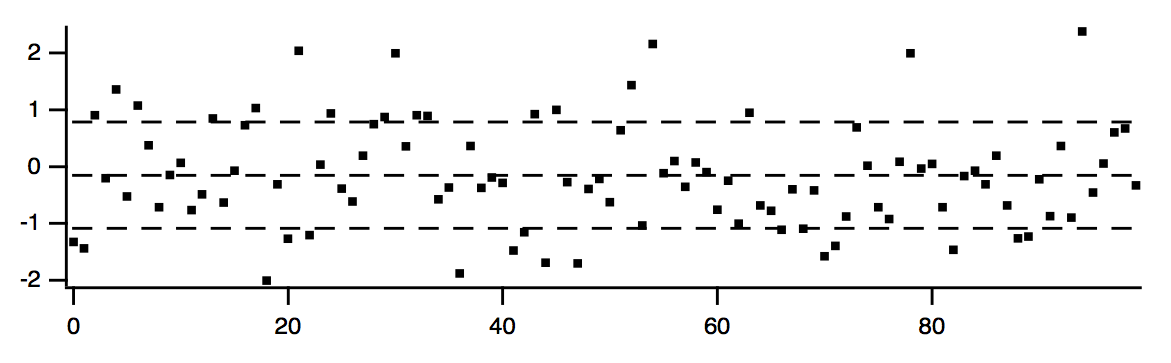

You could try this function using the following commands.

Make/N=100 wave0 = gnoise(1)

Display wave0; ModifyGraph mode(wave0)=2, lsize(wave0)=3

ShowAvgStdDev(wave0)

When you use WaveStats with a complex wave, you can choose to compute the same statistics as above for the real, imaginary, magnitude and phase of the wave. By default WaveStats only computes the statistics for the real part of the wave. When computing the statistics for other components, the operation stores the results in a multidimensional wave M_WaveStats.

If you are working with large amounts of data and you are concerned about computation speed you might be able to take advantage of the /M flag that limits the calculation to the first order moments.

If you are working with 2D or 3D waves and you want to compute the statistics for a domain of an arbitrary shape you should use the ImageStats operation with an ROI wave.

Histograms

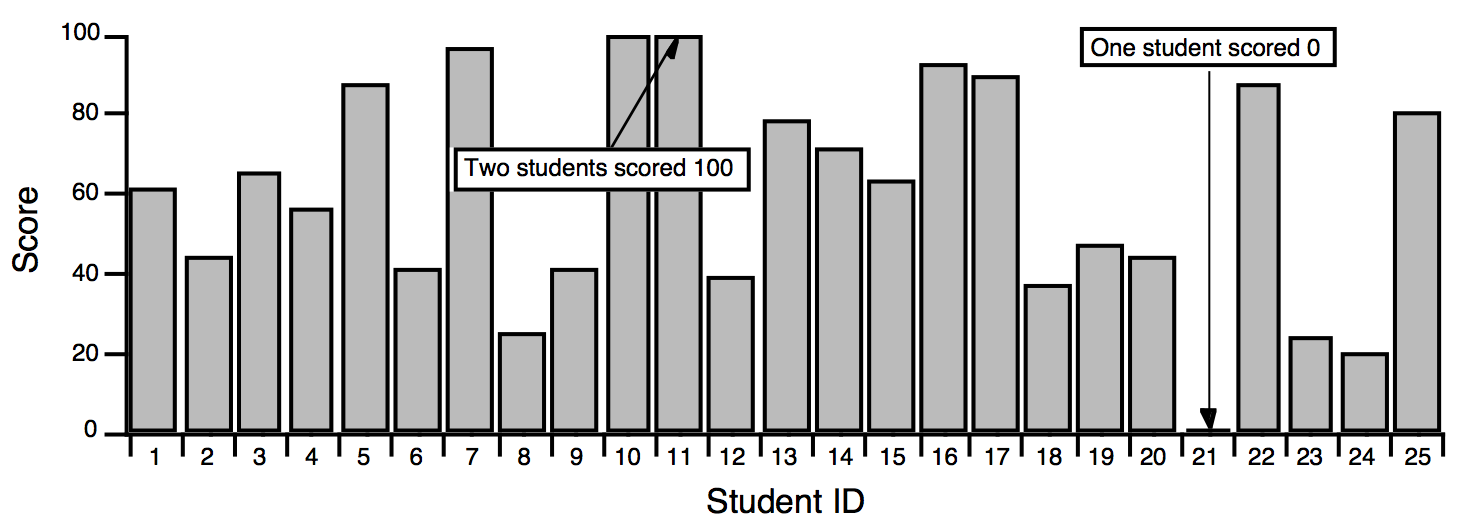

A histogram totals the number of input values that fall within each of a number of value ranges (or "bins") usually of equal extent. For example, a histogram is useful for counting how many data values fall in each range of 0-10, 10-20, 20-30, etc. This calculation is often made to show how students performed on a test:

The usual use for a histogram in this case is to figure out how many students fall into certain numerical ranges, usually the ranges associated with grades A, B, C, and D. Suppose the teacher decides to divide the 0-100 range into 4 equal parts, one per grade. The Histogram operation can be used to show how many students get each grade by counting how many students fall in each of the 4 ranges.

We start by creating a wave to hold the histogram output:

Make/O/D/N=4 studentsWithGrade

Next we execute the Histogram command which we generated using the Histogram dialog:

Histogram/B={0,25,4} scores,studentsWithGrade

The /B flag tells Histogram to create four bins, starting from 0 with a bin width of 25. The first bin counts values from 0 up to but not including 25.

The Histogram operation analyzes the source wave (scores), and puts the histogram result into a destination wave (studentsWithGrade).

Let's create a text wave of grades to plot studentsWithGrade versus a grade letter in a category plot:

Make/O/T grades = {"D", "C", "B", "A"}

Display studentsWithGrade vs grades

SetAxis/A/E=1 left

Everything looks good in the category plot. Let's double-check that all the students made it into the bins:

Print sum(studentsWithGrade)

23

There are two missing students. They are ones who scored 100 on the test. The four bins we defined are actually:

Bin 1: 0 - 24.99999

Bin 2: 25 - 49.99999

Bin 3: 50 - 74.99999

Bin 4: 75 - 99.99999

The problem is that the test scores actually encompass 101 values, not 100. To include the perfect scores in the last bin, we could add a small number such as 0.001 to the bin width:

Bin 1: 0 - 25.00999

Bin 2: 25.001 - 49.00199

Bin 3: 50.002 - 74.00299

Bin 4: 75.003 - 100.0399

The students who scored 25, 50 or 75 would be moved down one grade, however. Perhaps the best solution is to add another bin for perfect scores:

Make/O/T grades= {"D", "C", "B", "A", "A+"}

Histogram/B={0,25,5} scores,studentsWithGrade

For information on plotting a histogram, see Category Plots and Graphing Histogram Results.

This example was intended to point out the care needed when choosing the histogram binning. Our example used "manual binning".

The Histogram operation provides five ways to set binning. They correspond to the Destination Bins radio buttons in the Histogram dialog:

| Bin Mode | What It Does |

|---|---|

| Manual bins | Sets number of points and X scaling of destination wave based on parameters that you explicitly specify. |

| Auto-set bin range | Sets X scaling of destination wave to cover the range of values in the source wave. |

| Does not change the number of points (bins) in the destination wave. Thus, you need to set the number of points yourself before computing the histogram. | |

| When using the Histogram dialog, if you select Make New Wave or Auto from the Output Wave menu, the dialog must be told how many points the new wave should have. It displays the Number of Bins box to let you specify the number. | |

| Set bins from destination wave | Does not change X scaling or number of points in the destination wave. Thus, you need to set X scaling and number of points yourself before computing the histogram. |

| When using the Histogram dialog, the Set from destination wave radio button is only available if you choose Select Existing Wave from the Output Wave menu. | |

| Auto-set bins: 1+log2(N) | Examines the input data and sets the number of bins based on the number of input data points. Sets the bin range the same as if Auto-set bin range were selected. |

| Auto-set bins: 3.49 * Sdev * N^-1/3 | Examines the input data and sets the number of bins based on the number of input data points and the standard deviation of the data. Sets the bin range the same as if Auto-set bin range were selected. |

| Freedman-Diaconis method | Sets the optimal bin width to binWidth = 2 * IQR * N-1/3 where IQR is the interquartile distance (see StatsQuantiles) and the bins are evenly-distributed between the minimum and maximum values. Added in Igor Pro 7.00. |

Histogram Caveats

If you use the "Set bins from destination wave" bin mode, you must create the destination wave, using the Make operation, before computing the histogram. You also must set the X scaling of the destination wave, using the SetScale operation.

The Histogram operation does not distinguish between 1D waves and multidimensional waves. If you use a multidimensional wave as the source wave, it will be analyzed as if the wave were one dimensional. This may still be useful - you will get a histogram showing counts of the data values from the source wave as they fall into bins.

If you would like to perform a histogram of 2D or 3D image data, you may want to use the ImageHistogram operation, which supports specific features that apply to images only.

Graphing Histogram Results

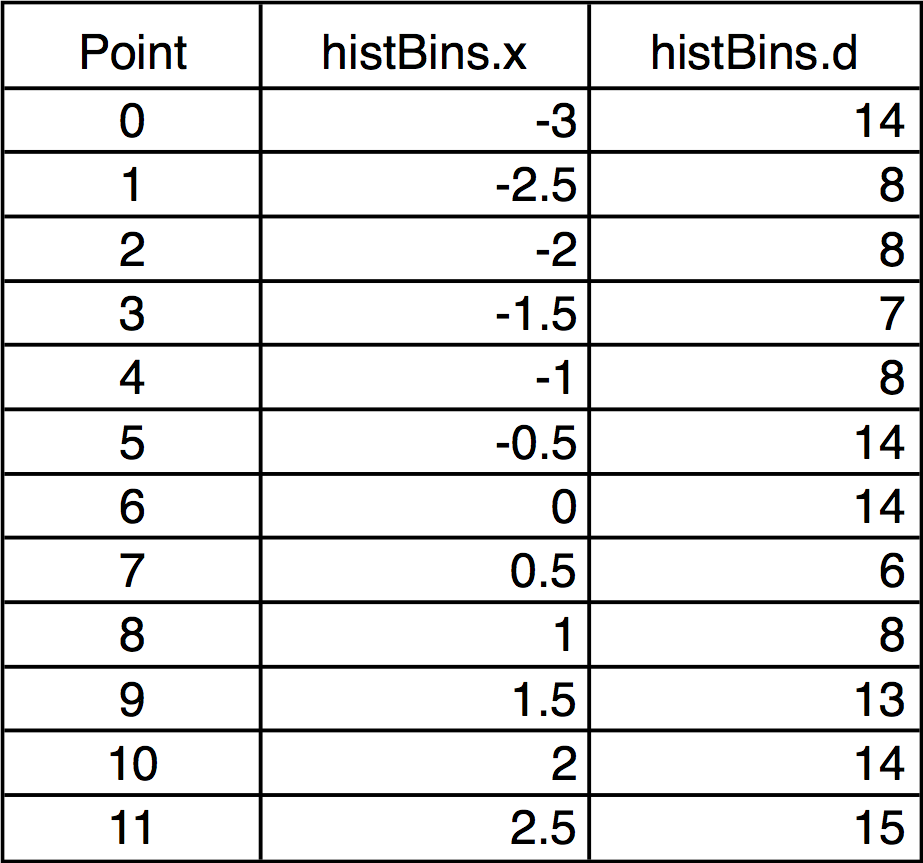

Our example above displayed the histogram results as a category plot because the bins corresponded to text values. Often histogram bins are displayed on a numeric axis. In this case you need to know how Igor displays a histogram result.

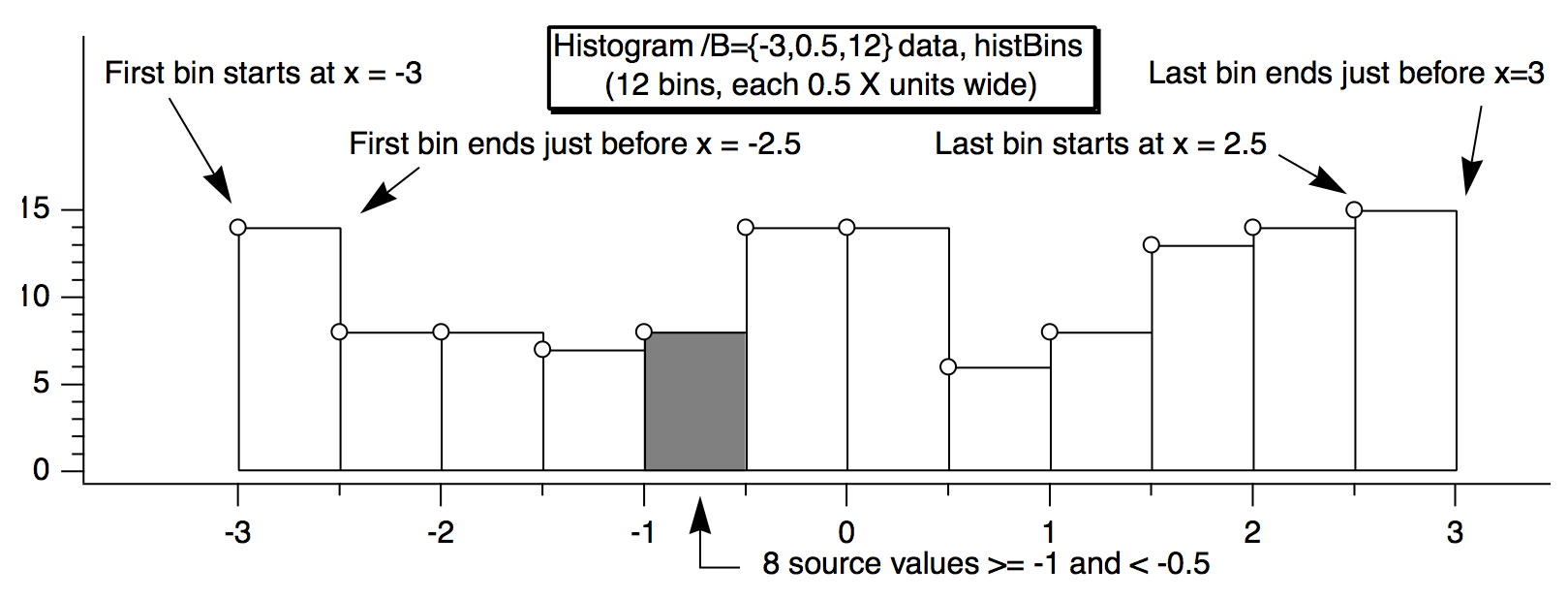

For example, this histBins destination wave has 12 points (bins), the first bin starting at -3, and each bin is 0.5 wide. The X scaling is shown in the table:

When histBins is graphed in both bars and markers modes, it looks like this:

Note that the markers are positioned at the start of the bars. You can offset the marker trace by half the bin width if you want them to appear in the center of the bin.

Alternatively, you can make a second histogram using the Bin-Centered X Values option. In the Histogram dialog, check the Bin-Centered X Values checkbox.

The Histogram Dialog

To use the Histogram operation, choose Histogram from the Analysis menu.

To use the "Manually set bins" or "Set from destination wave" bin modes, you need to decide the range of data values in the source wave that you want the histogram to cover. You can do this visually by graphing the source wave or you can use the WaveStats operation to find the exact minimum and maximum source values.

The dialog requires that you enter the starting bin value and the bin width. If you know the starting bin value and the ending bin value then you need to do some arithmetic to calculate the bin width.

A line of text at the bottom of the Destination Bins box tells you the first and last values, as well as the width and number of bins. This information can help with trial-and-error settings.

If you use the "Manually set bins" or any of the "Auto-set" modes, Igor will set the X units of the destination wave to be the same as the Y units of the source wave.

If you enable the Accumulate checkbox, Histogram does not clear the destination wave but instead adds counts to the existing values in it. Use this to accumulate results from several histograms in one destination. If you want to do this, don't use the "Auto-set bins" option since it makes no sense to change bins in mid-stream. Instead, use the "Set from destination wave" mode. To use the Accumulate option, the destination wave must be double-precision or single-precision and real.

The "Bin-Centered X Values" and "Create Square Root(N) Wave" options are useful for curve fitting to a histogram. If you do not use Bin-Centered X Values, any X position parameter in your fit function will be shifted by half a bin width. The Square Root(N) Wave creates a wave that estimates the standard deviation of the histogram data; this is based on the fact that counting data have a Poisson distribution. The wave created by this option does not try to do anything special with bins having zero counts, so if you use the square root(N) wave to weight a curve fit, these zero-count bins will be excluded from the fit. You may need to replace the zeroes with some appropriate value.

The binning modes were added in Igor Pro. In earlier versions of Igor, the accumulate option had two effects:

-

Caused Igor to not clear the destination wave

-

Caused Igor to effectively use the "Set bins from destination wave" mode

To maintain backward compatibility, the Histogram operation still behaves this way if the accumulate ("/A") flag is used and no bin ("/B") flag is used. This dialog always generates a bin flag. Thus, the accumulate flag just forces accumulation and has no effect on the binning.

You can use the Histogram operation on multidimensional waves but they are treated as though the data belonged to a single 1D wave. If you are working with 2D or 3D waves you may prefer to use the ImageHistogram operation, which computes the histogram of one layer at a time.

Histogram Example

The following commands illustrate a simple test of the histogram operation.

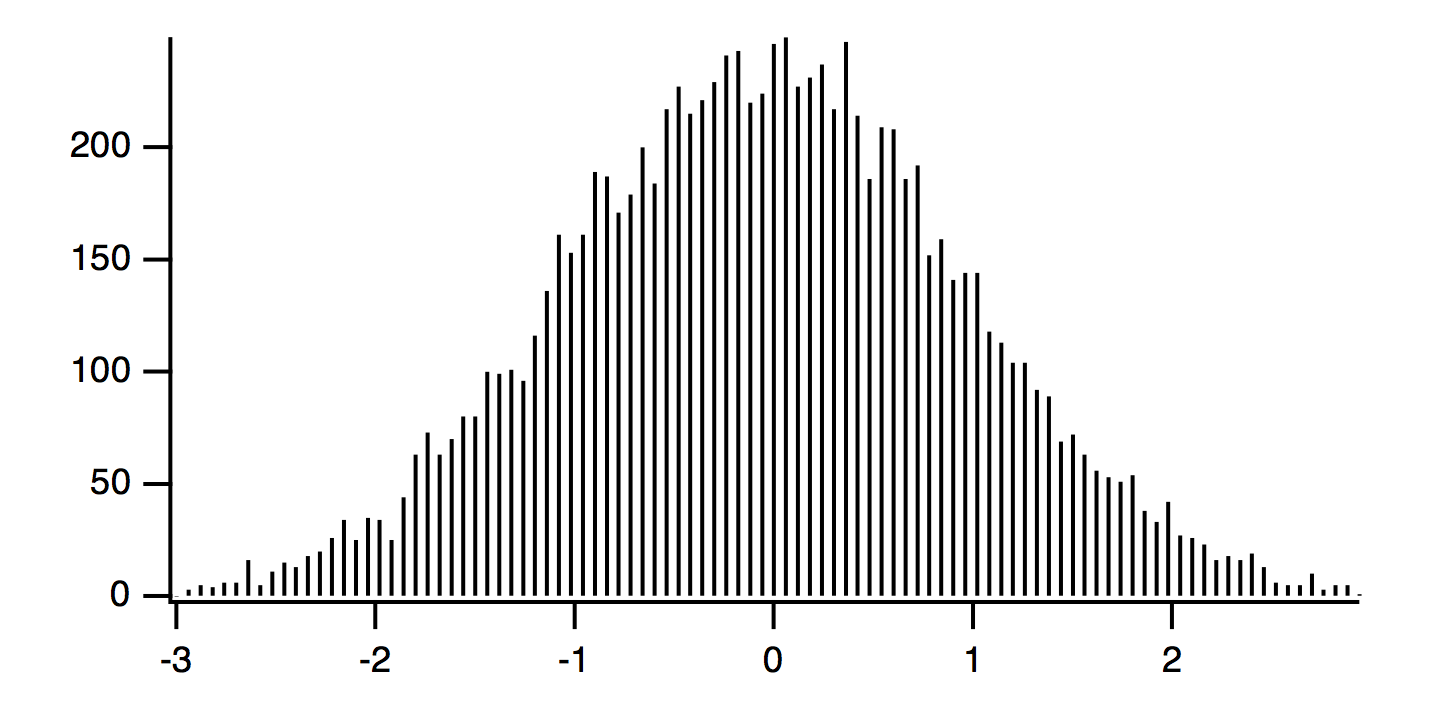

SetRandomSeed 0.2 // For reproducible randomness

Make/O/N=10000 noise = gnoise(1) // Make raw data

Make/O hist // Make destination wave

Histogram/B={-3, (3 - -3)/100, 100} noise, hist // Perform histogram

Display hist; Modify mode(hist)=1

These commands produce the following graph:

The raw data has 10,000 samples drawn from a Gaussian distribution having standard deviation of 1.0, and mean of zero. The histogram is made with 100 bins with width of 6/100 = 0.06. The amplitude of the histogram is expected to be:

A = (N*dx)/sqrt(2*pi)/sigma = (10000*0.06)/sqrt(2*pi)/1 = 239.365

The X scaling of the wave hist uses the Histogram default of the left side of the bins.

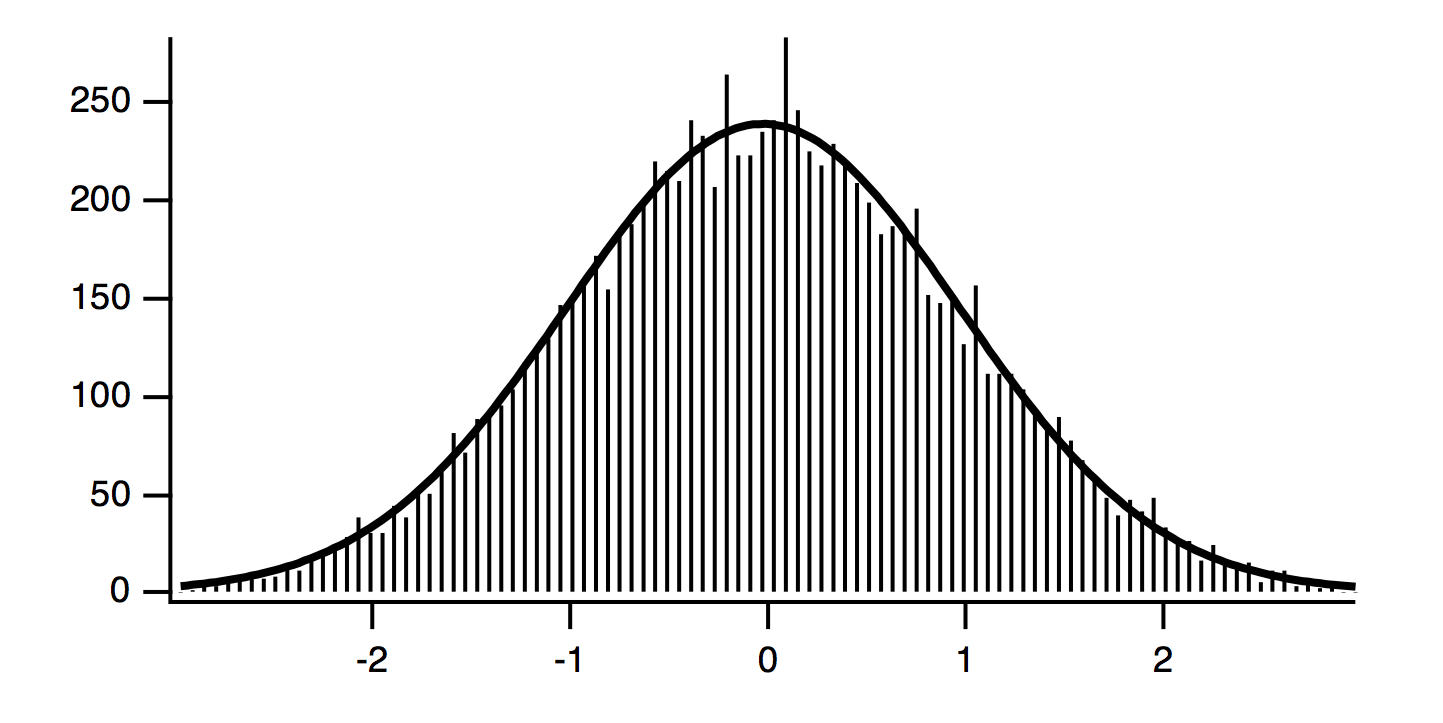

Curve Fitting to a Histogram

Because the values in the source wave are Gaussian deviates generated by the gnoise function, the histogram should have the familiar Gaussian bell-shape. You can estimate the characteristics of the population the samples were taken from by fitting a Gaussian curve to the data. First try fitting a Gaussian curve to the example histogram:

CurveFit gauss hist /D // Curve fit to histogram

The solution from the curve fit is:

y0 = -0.85917 ± 2.74

A = 238.23 ± 3.07

x0 = -0.047537 ± 0.0113

width = 1.4337 ± 0.0272

Because of the definition of Igor's built-in gauss fit function, we expect the width coefficient to be sqrt(2)*sigma or 1.4142. The coefficients from this fit are within one standard deviation of the expected values, except for peak position x0.

The peak position x0 is shifted approximately half a bin below zero. Since the gnoise function produces random numbers with mean of zero, we would expect x0 to be close to zero. The shifted value of x0 is a result of Igor's way of storing the X values for histogram bins. Setting the X value to the left edge is good for displaying a bar chart, but bad for curve fitting.

By default, a histogram wave has the X scaling set such that an X value gives the value at the left end of a bin. Usually if you are going to fit a curve to a histogram, you want centered X values. You can change the X scaling to centered values using SetScale. But it is easier to simply use the option to have the Histogram operation produce output with bin-centered X values by adding the /C flag to the Histogram command:

Histogram/C/B={-3, (3 - -3)/100, 100} noise, hist // Perform histogram

CurveFit gauss hist /D // Curve fit to histogram

The result of the curve fit is the same as before except that x0 is closer to what we expect:

y0 = -0.85917 ± 2.74

A = 238.23 ± 3.07

x0 = -0.017537 ± 0.0113

width = 1.4337 ± 0.0272

But this shifts the trace showing the histogram by half a bin on the graph. If the trace is displayed using markers or dots, this may be what is desired, but if you have used bars, the display is incorrect.

Another possibility is to make an X wave to go with the histogram data. This X wave would contain X values shifted by half a bin. Use this X wave as input to the curve fit, but don't use it on the graph:

Histogram/B={-3, (3 - -3)/100, 100} noise, hist // Histogram without /C

Duplicate hist, hist_x

hist_x = x + deltax(hist)/2

CurveFit gauss hist /X=hist_x/D

Use this method to graph the original histogram wave without modifying the X scaling, so a graph using bars is correct. It also gives a curve fit that uses the center X values, giving the correct x0. You could also use the Histogram operation twice, once with the /C flag to get bin-centered X values, and once without to get the shifted X scaling appropriate for bars. Both methods have the drawback of creating an extra wave that you must track.

This fit is not statistically correct. Because the the histogram represents counts, the values in a histogram should have uncertainties described by a Poisson distribution. The standard deviation of a Poisson distribution is equal to the square root of the mean, which implies that the estimated uncertainty of a histogram bin depends on the magnitude of the value. This in turn implies that the errors are not constant and a curve fit will give a biased solution and possibly poor estimates of the uncertainties of the fit coefficients.

This problem can be solved approximately using a weighting wave. The appropriate weighting wave is generated by the Histogram operation if you add the /N flag or turn on the Create Square Root(N) Wave checkbox in the Histogram dialog.

The next example example makes a new data set using gnoise to make gaussian-distributed values, makes a histogram with bin-centered X values and the appropriate weighting wave, and then does two curve fits, one without weighting and one with.

We expect coefficient values from the curve fit to be:

y0 = 0

A = (1024*deltax(gdata_Hist))/sqrt(2*pi)/1 = 139.863

x0 = 0

width = 1.4142

SetRandomSeed 0.5 // For reproducible randomness

Make/O/N=1024 gdata = gnoise(1)

Make/O/N=20 gdata_Hist

Histogram/C/N/B=4 gdata,gdata_Hist

Display gdata_Hist

ModifyGraph mode=3,marker=8

CurveFit gauss gdata_Hist /D

CurveFit gauss gdata_Hist /W=W_SqrtN /I=1 /D

Note the addition of "/W=W_SqrtN /I=1" addition to the second CurveFit command. This adds the weighting using the weighting wave created by the Histogram operation. Also, /B=4 was used to have the Histogram operation set the number of bins and bin range appropriately for the input data.

The results from the unweighted fit:

y0 = -3.3383 ± 2.98

A = 133.26 ± 3.51

x0 = 0.024088 ± 0.0252

width = 1.5079 ± 0.0578

And from the weighted fit:

y0 = 0.33925 ± 0.804

A = 135.21 ± 5.25

x0 = 0.0038416 ± 0.031

width = 1.3604 ± 0.0405

The results are clearly and significantly different. Since we created fake data we know what to expect the results to be; the weighted fit also is closer to our expectation.

There are some possible objections to this weighted fit, that may or may not be important to you.

The weighted fit is only an approximate solution to the fact that the actual errors follow a Poisson distribution. The truly correct way to fit count data is to do a maximum likelihood fit. Igor does not directly support maximum likelihood fitting.

When using the square root approximation of the standard deviation of the Poisson distribution, the fitted model is a better approximation of the actual counts than each individual original data points. But Igor doesn't have a way to replace the weighting based on the current iteration, so the only way to do that is to do the fit, re-compute the weighting wave and do the fit again. Repeat long enough that it doesn't change much any more.

The shape of the Poisson distribution is well-approximated by a Gaussian only for large numbers of counts. In practice, "large" may mean "more than 5 or so". In our example, only five points out on the tails have five or fewer counts, but we started with over 1000 points. You may not be so lucky!

Iterative Weighted Fit

This section is for advances users only. It provides an example of an iterative fit with corrected weighting wave.

Function FitGaussHistogram(Wave histwave, Wave InSqrtNwave)

// Make a copy so that we don't change the input wave

Duplicate/FREE InSqrtNwave, sqrtNwave

// Get a first cut at the correct fit using sqrtNwave provided by Histogram

CurveFit/Q gauss histwave /W=sqrtNwave /I=1

Wave W_coef

// Save the fit solution for comparison in the loop

Duplicate/FREE W_coef, lastCoef

// Compute the length of the initial solution to use in the stopping criterion

MatrixOp/FREE/O length = sqrt(sum(magSqr(W_coef)))

Variable initialLength = length[0]

// Now loop and re-compute the weighting wave for each iteration

// Do a new fit with the new weighting

do

// New weighting is square root of the expected Y values from the fit

sqrtNwave = sqrt(Gauss1D(W_coef, pnt2x(histwave, p)))

// Do a new fit

CurveFit/Q gauss histwave /W=sqrtNwave /I=1

Wave W_coef

// Compute the length of the difference between this fit and the last

MatrixOp/FREE/O delta = sqrt(sum(magSqr(W_coef - lastCoef)))

// Uncomment these lines if you would like to see the progress

// Print delta

// Print W_coef

// Rather arbitrary stopping criterion

if (delta[0] < 1e-8*initialLength)

break

endif

lastCoef = W_coef

while(1)

Wave W_sigma

Print "y0\t=\t",W_coef[0], "±", W_sigma[0]

Print "A\t=\t",W_coef[1], "±", W_sigma[1]

Print "x0\t=\t",W_coef[2], "±", W_sigma[2]

Print "width\t=\t",W_coef[3], "±", W_sigma[3]

End

To try it, paste the code into the procedure window, compile, and execute this on the command line:

FitGaussHistogram(gdata_Hist, W_SqrtN)

The result was

y0 = -0.985823 ± 1.22856

A = 138.462 ± 5.31568

x0 = -0.00928052 ± 0.0313763

width = 1.45437 ± 0.0521087

This result from this example is within one standard deviation of the weighted fit using the weighting wave generated by the histogram.

Computing an "Integrating" Histogram

In a histogram, each bin of the destination wave contains a count of the number of occurrences of values in the source that fell within the bounds of the bin. In an integrating histogram, instead of counting the occurrences of a value within the bin, we add the value itself to the bin. When we're done, the destination wave contains the sum of all values in the source which fell within the bounds of the bin.

Igor comes with an example experiment called "Integrating Histogram" that illustrates how to do this with a user function. To see the example, choose File→Example Experiments→Analysis→Integrating Histogram.

Sorting

The Sort operation sorts one or more 1D numeric or text waves in ascending or descending order.

The Sort operation is often used to prepare a wave or an XY pair for subsequent analysis. For example, the interp function assumes that the X input wave is monotonic.

There are other sorting-related operations: MakeIndex and IndexSort. These are used in rare cases and are described the section MakeIndex and IndexSort. The SortColumns operation sorts columns of multidimensional waves. Also see the SortList function for sorting string lists.

To use the Sort operation, choose Sort from the Analysis menu.

The sort key wave controls the reordering of points. However, the key wave itself is not reordered unless it is also selected as a destination wave in the "Waves to Sort" list.

The number of points in the destination wave or waves must be the same as in the key wave. When you select a wave from the dialog's Key Wave list, Igor shows only waves with the same number of points in the Waves to Sort list.

The key wave can be a numeric or text wave, but it must not be complex. The destination wave or waves can be text, real or complex except for the MakeIndex operation in which case the destination must be text or real.

The number of destination waves is constrained by the 2500 byte limit in Igor's command buffer. To sort a very large number of waves, use several Sort commands in succession, being careful not to sort the key wave until the very last.

By default, text sorting is case-insensitive. Use the /C flag with the Sort operation to make it case-sensitive.

Simple Sorting

In the simplest case, you would select a single wave as both the source and the destination. Then Sort would merely sort that wave.

If you want to sort an XY pair such that the X wave is in order, you would select the X wave as the source and both the X and Y waves as the destination.

Sorting to Find the Median Value

The following user-defined function illustrates a simple use of the Sort operation to find the median value of a wave.

Function FindMedian(w, x1, x2) // Returns median value of wave w

Wave w

Variable x1, x2 // Range of interest

Variable result

Duplicate/R=(x1,x2)/FREE w, medianWave // Make a clone of wave

Sort medianWave, medianWave // Sort clone

SetScale/P x 0,1,medianWave

result = medianWave((numpnts(medianWave)-1)/2)

return result

End

It is easier and faster to use the built-in median function to find the median value in a wave.

Multiple Sort Keys

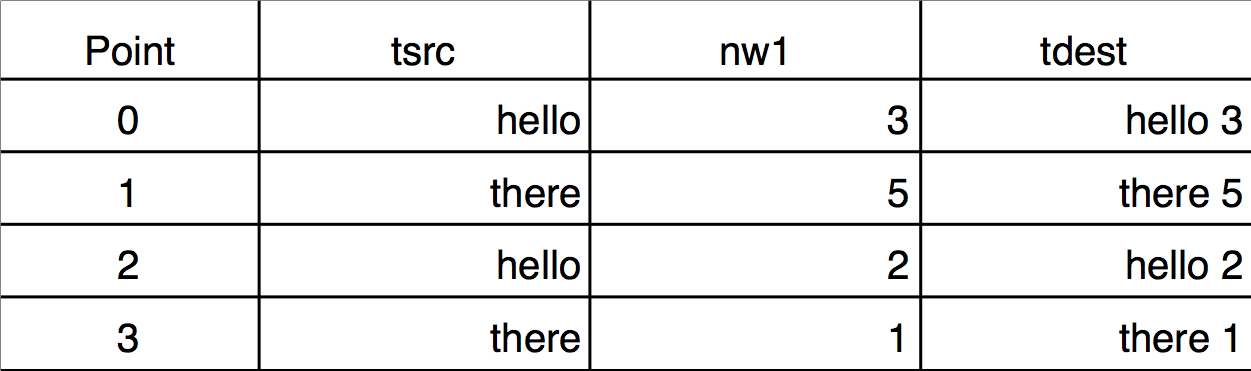

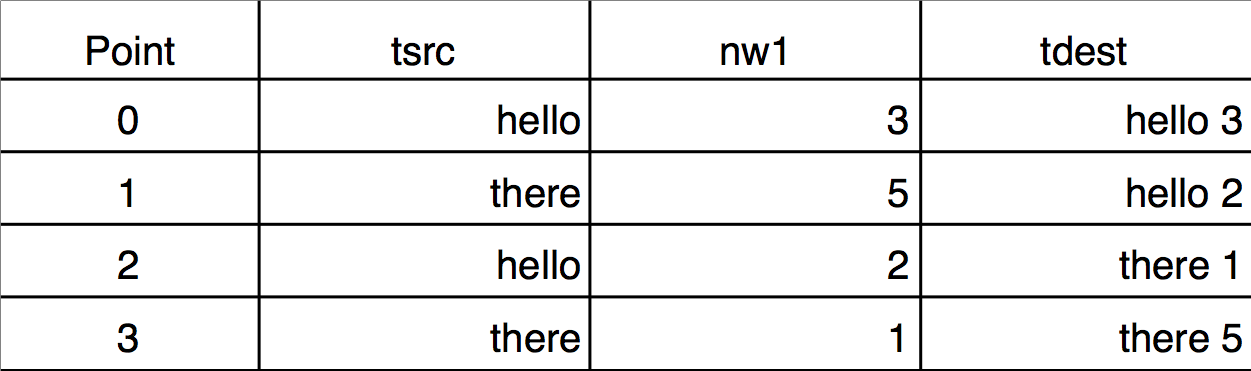

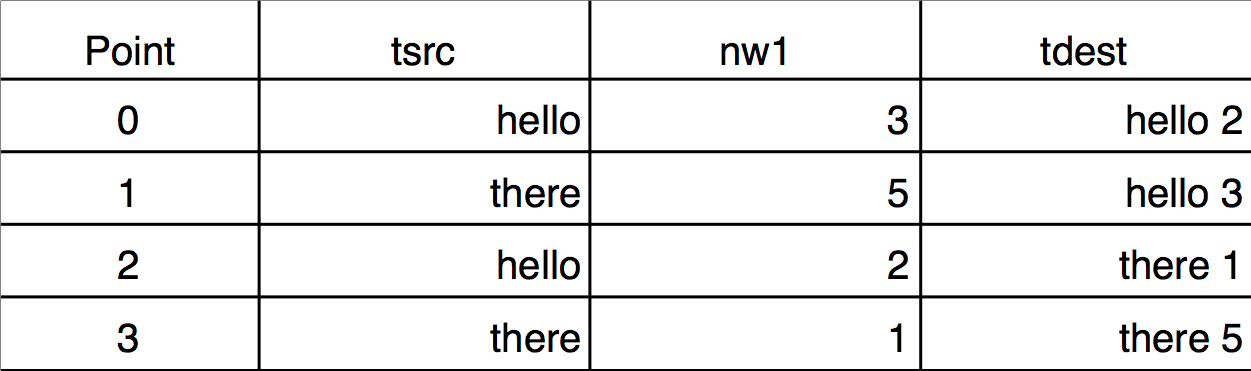

If the key wave has two or more identical values, you may want to use a secondary source to determine the order of the corresponding points in the destination. This requires using multiple sort keys. The Sorting dialog does not provide a way to specify multiple sort keys but the Sort operation does. Here is an example demonstrating the difference between sorting by single and by multiple keys. Notice that the sorted wave (tdest) is a text wave, and the sort keys are text (tsrc) and numeric (nw1):

Make/O/T tsrc={"hello","there","hello","there"}

Duplicate/O tsrc,tdest

Make nw1= {3,5,2,1}

tdest= tsrc + " " + num2str(nw1)

Edit tsrc,nw1,tdest

Single-key text sort:

Sort tsrc,tdest // nw1 not used

Execute this to scramble tdest again:

tdest= tsrc + " " + num2str(nw1)

Execute this to see a two key sort (nw1 breaks ties):

Sort {tsrc,nw1},tdest

The reason that "hello 3" sorts after "hello 2" is because nw1[0] = 3 is greater than nw1[2] = 2.

You can sort by more than two keys by specifying more than two waves inside the braces.

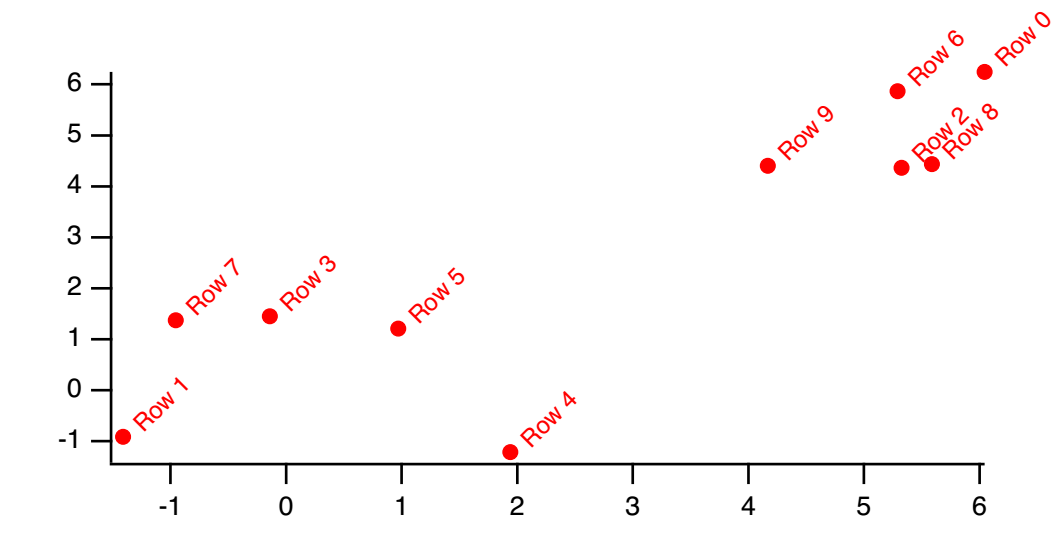

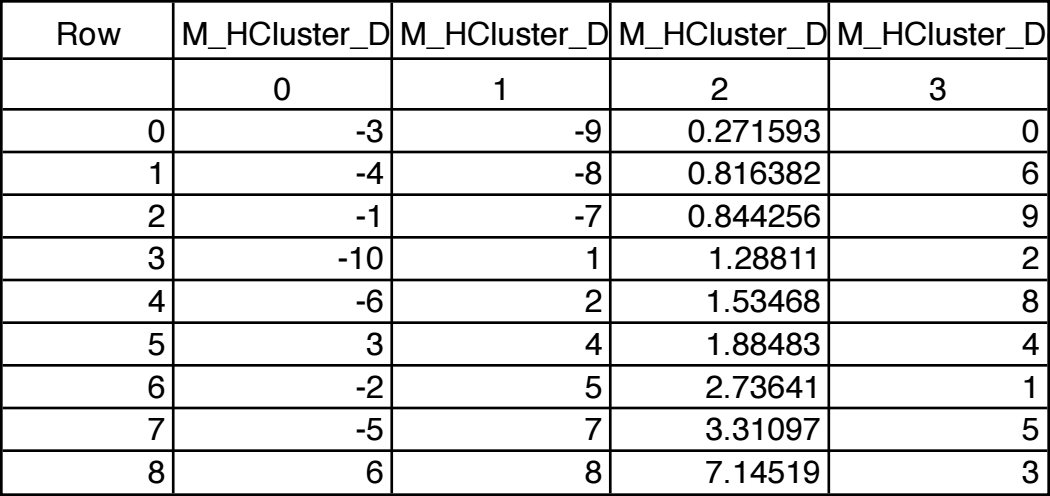

MakeIndex and IndexSort

The MakeIndex and IndexSort operations are infrequently used. You will normally use the Sort operation.

Applications of MakeIndex and IndexSort include:

-

Sorting large quantities of data

-

Sorting individual waves from a group one at a time

-

Accessing data in sorted order without actually rearranging the data

-

Restoring data to the original ordering

The MakeIndex operation creates a set of index numbers. IndexSort can then use the index numbers to rearrange data into sorted order. Together they can be used to sort just like the Sort operation but with an extra wave and an extra step.

The advantage is that once you have the index wave you can quickly sort data from a given set of waves at any time. For example, if you have hundreds of waves you cannot use the normal sort operation on a single command line. Also, when writing procedures it is sometimes more convenient to loop through a set of waves one at a time than to try to generate a single command line with multiple waves.

You can also use the index values to access data in sorted order without using the IndexSort operation. For example, if you have data and index waves named wave1 and wave1index, you can access the data in sorted order on the right hand side of a wave assignment like so:

wave1[wave1index[p]]

Like the Sort operation, the MakeIndex operation can handle multiple sort keys.

The output wave from MakeIndex contains indices which can be used to access the elements of the input wave in sorted order. These indices can be used later with IndexSort to sort the input wave and/or to reorder other waves. For example:

Make/O data0 = {1,9,2,3}

Make/O/N=4 index

MakeIndex data0, index

Print index // Prints 0, 2, 3, 1

The output values 0, 2, 3, and 1 mean that, to sort data0, you would access element 0, then element 2, then element 3, then element 1. For example, this prints the values of data0 in order:

Print data0[0], data0[2], data0[3], data0[1]

You can now apply that sequence of access using IndexSort:

IndexSort index, data0

Print data0 // Prints 1, 2, 3, 9

You can apply the same reordering to another wave using IndexSort:

Make/O data1 = {0,1,2,3}

IndexSort index, data1

Print data1 // Prints 0, 2, 3, 1

Decimation

If you have a large data set it may be convenient to deal with a smaller but representative number of points. In particular, if you have a graph with millions of points, it probably takes a long time to draw or print the graph. You can do without many of the data points without altering the graph much. Decimation is one way to accomplish this.

There are at least two ways to decimate data:

-

Keep only every nth data value. For example, keep the first value, discard 9, keep the next, discard 9 more, etc. We call this Decimation by Omission.

-

Replace every nth data value with the result of some calculation such as averaging or filtering. We call this Decimation by Smoothing.

Decimation by Omission

To decimate by omission, create the smaller output wave and use a simple Waveform Arithmetic and Assignment statement to set their values. For example, If you are decimating by a factor of 10 (omitting 9 out of every 10 values), create an output wave with 1/10th as many points as the input wave.

For example, make a 1000 point test input waveform:

Make/O/N=1000 wave0

SetScale x 0, 5, wave0

wave0 = sin(x) + gnoise(.1)

Now, make a 100 point waveform to contain the result of the decimation:

Make/O/N=100 decimated

SetScale x 0, 5, decimated // preserve the x range

decimated = wave0[p*10] // for(p=0;p<100;p+=1) decimated[p]= wave0[p*10]

Decimation by omission can be obtained more easily using the Resample operation and dialog by using an interpolation factor of 1 and a decimation factor of (in this case) 10, and a filter length of 1.

Duplicate/O wave0, wave0Resampled

Resample/DOWN=10/N=1 wave0Resampled

Decimation by Smoothing

While decimation by omission completely discards some of the data, decimation by smoothing combines all of the data into the decimated result. The smoothing can take many forms: from simple averaging to various kinds of lowpass digital filtering.

The simplest form of smoothing is averaging (sometimes called "boxcar" smoothing). You can decimate by averaging some number of points in your original data set. If you have 1000 points, you can create a 100 point representation by averaging every set of 10 points down to one point. For example, make a 1000 point test waveform:

Make/O/N=1000 wave0

SetScale x 0, 5, wave0

wave0 = sin(x) + gnoise(.1)

Now, make a 100 point waveform to contain the result of the decimation:

Make/O/N=100 wave1

SetScale x 0, 5, wave1

wave1 = mean(wave0, x, x+9*deltax(wave0))

Notice that the output wave, wave1, has one tenth as many points as the input wave.

The averaging is done by the waveform assignment

wave1 = mean(wave0, x, x+9*deltax(wave0))

This evaluates the right-hand expression 100 times, once for each point in wave1. The symbol "x" returns the X value of wave1 at the point being evaluated. The right-hand expression returns the average value of wave0 over the segment that corresponds to the point in wave1 being evaluated.

It is essential that the X values of the output wave span the same range as the X values of the input range. In this simple example, the SetScale commands satisfy this requirement.

Results similar to the example above can be obtained more easily using the Resample operation and dialog.

Resample is based on a general sample rate conversion algorithm that optionally interpolates, low-pass filters, and then optionally decimates the data by omission. The lowpass filter can be set to "None" which averages an odd number of values centered around the retained data points. So decimation by a factor of 10 would involve averaging 11 values centered around every 10th point.

The decimation by averaging above can be changed to be 11 values centered around the retained data point instead 10 values from the beginning of the retained data point this way:

Make/O/N=100 wave1Centered

SetScale x 0, 5, wave1Centered

wave1Centered = mean(wave0, x-5*deltax(wave0), x+5*deltax(wave0))

Each decimated result (each average) is formed from different values than wave1 used, but it isn't any less valid as a representation of the original data.

Using the Resample operation:

Duplicate/O wave0, wave2

Resample/DOWN=10/WINF=None/N=11 wave2 // no /UP means no interpolation

gives nearly identical results to the wave1Centered = mean(...) computation, the exceptions being only the initial and final values, which are simple end-effect variations.

The /WINF and /N flags of Resample define simple low-pass filtering options for a variety of decimation-by-smoothing choices. The default /WINF=Hanning window gives a smoother result than /WINF=None. See WindowFunction operation for more about these window options.

See Multidimensional Decimation for a discussion of decimating 2D and higher dimension waves.

Miscellaneous Operations

Using WaveTransform

When working with large amounts of data (many waves or multiple large waves), it is frequently useful to replace various wave assignments with wave operations which execute significantly faster. The WaveTransform operation is designed to help in these situations. For example, to flip the data in a 1D wave you can execute the following code:

Function FlipWave(inWave)

wave inWave

Variable num=numPnts(inWave)

Variable n2=num/2

Variable i,tmp

num-=1

Variable j

for(i=0;i<n2;i+=1)

tmp=inWave[i]

j=num-i

inWave[i]=inWave[j]

inWave[j]=tmp

endfor

End

You can obtain the same result much faster using the command:

WaveTransform/O flip, waveName

In addition to "flip", WaveTransform can also fill a wave with point index or the inverse point index, shift data points, normalize, convert to complex-conjugate, compute the squared magnitude or the phase, etc.

For multi-dimensional waves, use MatrixOp instead of WaveTransform. See Using MatrixOp for details.

The Compose Expression Dialog

The Compose Expression item in the Analysis menu brings up the Compose Expression dialog.

This dialog generates a command which sets the value of a wave, variable or string based on a numeric or string expression created by pointing and clicking. Any command that you can generate using the dialog could also be typed directly into the command line.

The command that you generate with the Compose Expression dialog consists of three parts: the destination, the assignment operator and the expression. The command resembles an equation and is of the form:

<destination> <assignment-operator> <expression>

For example:

wave1 = K0 + wave2 // a wave assignment command

K0 += 1.5 * K1 // a variable assigment command

str1 = "Today is" + date() // a string assignment command

Table Selection Item

The Destination Wave pop-up menu contains a "_table selection_" item. When you choose "_table selection_", Igor assigns the expression to whatever is selected in the table. This could be an entire wave or several entire waves, or it could be a subset of one or more waves.